Originally published by our sister publication Anesthesiology News

Case Western Reserve University

Consultant Anesthesiologist

Department of General Anesthesiology

Cleveland Clinic

NOVEMBER 21, 2024

The field of artificial intelligence continues to expand at an unprecedented pace, pushing the boundaries of what machines can achieve.1 In 2022, OpenAI, a prominent AI research and development organization, introduced two groundbreaking AI products: an AI image generator known as DALL•E 2 (in April) and a “chatbot” known as ChatGPT (release 3.5, in November).2 In May 2024, OpenAI introduced a combined successor to these products known as GPT-4o. This landmark product and its many recent AI cousins have garnered significant attention for their remarkable capabilities and potential applications in education, commerce, medicine and industry.2

ChatGPT represents a significant leap forward in conversational AI. The GPT part stands for “generative pre-trained transformer,” and this software technology enables natural language interactions with machines in truly remarkable ways. ChatGPT allows human users to engage in meaningful conversations with an AI companion that returns well-crafted, intelligent responses free of grammatical errors and misspelled words. ChatGPT has demonstrated its proficiency in various applications, including customer support, video content creation, language translation and creative writing.

GPT-4o has also brought about a revolution in the realm of visual AI. By understanding the semantics and context of the provided prompts, GPT-4o can synthesize intricate and imaginative visual content, as illustrated in the original artwork examples provided later in this article.

This article investigates some potential applications of such AI chatbots, with discussions concerning their promise to transform patient care, medical research and clinical decision making. It is expected that ChatGPT and other chatbot implementations will soon be able to facilitate doctor–patient interactions, streamline administrative tasks and support medical professionals in making informed decisions. Although the clinically focused exploration of AI offered in this article concentrates on the new capabilities introduced by ChatGPT, many of the considerations apply equally well to other recently launched AI products, such as pi.ai, an especially friendly chatbot, or You.com.

We have now entered a new technological era where AI has the power to augment human intelligence, enable new discoveries and redefine the boundaries of what is possible in medicine. By responsibly embracing these advances, we can pave the way for a future where AI seamlessly collaborates with healthcare workers, hopefully bringing about improved patient outcomes and a more efficient healthcare ecosystem—that’s the goal. The reality is likely to be more nuanced, with profound questions to be addressed as AI applications continue to evolve.

Getting Started

To get started with ChatGPT, first create an account with OpenAI; details are available at OpenAI.com. Once you have installed the necessary software, follow the supplied documentation on how best to format requests (“prompts”). Experiment by trying out different requests and observing the responses obtained. With GPT-4o, you can generate text from a given prompt, as well as images from textual descriptions. It’s worth noting that ChatGPT and its AI cousins are powerful tools that should be used responsibly. (OpenAI provides guidelines on its website for ethical AI use.)

To better illustrate these AI tools, I have included several AI-authored examples. Use cases illustrated include a discussion on the dangers of AI (Figure 1) and on the limitations of using ChatGPT for medical advice (Figure 2). Figure 3 illustrates the construction of quality questions (prompts), a topic discussed further below, while Figure 4 provides an example of ChatGPT providing completely wrong information, sometimes called a “hallucination.” In another example, I asked ChatGPT to prepare a computer program to calculate body mass index using the Python programming language (Figure 5).
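
For readers curious about what a body mass index program involves, here is a minimal sketch in Python. It is not the ChatGPT output shown in Figure 5. The function names `bmi` and `bmi_category` are illustrative, and the category cut-offs (18.5, 25 and 30 kg/m2) are the commonly cited adult thresholds, assumed here rather than taken from this article.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """Return body mass index: weight in kilograms divided by height in meters squared."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return weight_kg / height_m ** 2


def bmi_category(value: float) -> str:
    """Map a BMI value to a commonly used adult category (cut-offs are an assumption)."""
    if value < 18.5:
        return "underweight"
    if value < 25:
        return "normal weight"
    if value < 30:
        return "overweight"
    return "obese"


if __name__ == "__main__":
    # Hypothetical patient: 70 kg, 1.75 m tall.
    example = bmi(70, 1.75)
    print(f"BMI {example:.1f} ({bmi_category(example)})")
```

Even for a program this small, the advice given later in this article applies: check the formula and the thresholds against a trusted source before relying on the result.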

Prompt Engineering

The most important “secret” to getting useful commentary from AI chatbots like ChatGPT is asking the right question. The best responses are obtained when the prompt question is carefully crafted, as a lawyer would do in a courtroom cross-examination. The technical term for this art is “prompt engineering,” and understanding its principles can help enormously in obtaining well-crafted, well-organized responses. In other words, prompt engineering is a technique used with language models like ChatGPT to obtain specific outputs or responses to user inputs, and this is done by creating specialized prompts that guide the model toward producing the desired output.

The process of prompt engineering thus involves carefully constructing prompts that include relevant keywords, phrases or other cues that help guide the model toward generating the desired output. As crafted prompts are designed to elicit a specific response or output from the model, the choice of words and phrases used in the prompt can have a significant impact on the quality and accuracy of the generated responses.

Prompt engineering can be used to improve the accuracy and relevance of the responses generated by the AI language model. For example, a prompt can be designed to provide specific information or context that can help the model generate a more accurate and relevant response. Similarly, prompts can be used to guide the model toward generating responses that match a particular tone, style or level of formality. Figure 3 gives examples provided by ChatGPT.
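
One way to make these ideas concrete is to assemble a prompt from explicit parts (task, context, audience, tone and output format) rather than typing a single unstructured question. The sketch below only builds the prompt text; it does not call any chatbot service. The function `build_prompt` and its field labels are hypothetical conventions chosen for illustration, not an OpenAI format.

```python
def build_prompt(task, context="", audience="", tone="", output_format=""):
    """Assemble a structured prompt string from optional cues.

    Only the task is required; empty cues are left out of the prompt.
    """
    if not task.strip():
        raise ValueError("a task is required")
    fields = [
        ("Task", task),
        ("Context", context),
        ("Audience", audience),
        ("Tone", tone),
        ("Format", output_format),
    ]
    # Keep only the cues that were actually supplied.
    lines = [f"{label}: {value.strip()}" for label, value in fields if value.strip()]
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical example request.
    print(build_prompt(
        task="Suggest a workup for a woman with newly discovered anemia.",
        context="Hypothetical teaching case; no real patient data.",
        audience="Anesthesiology residents",
        tone="Formal",
        output_format="Numbered list of at most eight steps",
    ))
```

Spelling out the audience, tone and format separately makes it easy to change one cue at a time and observe how the response changes.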

Medical Applications of AI

Medical AI is changing the delivery of clinical care in numerous ways, such as assisting in drug discovery, advising on clinical drug selection or interpreting radiographic images.3-8 The importance of these developments is highlighted by the launching of several medical journals that focus on AI in medicine, such as Artificial Intelligence in Medicine, Journal of Medical Artificial Intelligence, IEEE Journal of Biomedical and Health Informatics, and NEJM AI.

One particularly useful AI application in anesthesiology would be an advanced tool that reviews the preoperative patient’s medical record and generates a detailed synopsis. Although some electronic health records offer basic versions of this feature, future AI enhancements would go further by recognizing specific circumstances relevant to anesthesiologists. For instance, AI could automatically search for conditions like pseudocholinesterase deficiency, review previous surgical histories to anticipate potential complications and tailor postoperative pain management plans based on past effectiveness and the risk of opioid dependence. Such an AI application would also intelligently disregard outdated concerns, such as an echocardiogram from two years ago showing an ejection fraction of 17% in a patient with a recent heart transplant, or an old report of severe aortic stenosis in a patient with a new aortic valve.
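
The “disregard outdated concerns” idea can be sketched as a simple rule: drop a finding when a later procedure has made it obsolete. The example below is a toy illustration under stated assumptions, not a description of any existing record system. The `SUPERSEDED_BY` table, the `Finding` and `Procedure` classes and the topic labels are all hypothetical, and a real tool would need far richer clinical logic.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical rule table: procedure name -> finding topics it renders outdated.
SUPERSEDED_BY = {
    "heart transplant": {"ejection fraction"},
    "aortic valve replacement": {"aortic stenosis"},
}


@dataclass
class Finding:
    topic: str
    detail: str
    recorded: date


@dataclass
class Procedure:
    name: str
    performed: date


def relevant_findings(findings, procedures):
    """Return findings that no later procedure has superseded."""
    kept = []
    for finding in findings:
        outdated = any(
            finding.topic in SUPERSEDED_BY.get(proc.name, set())
            and proc.performed > finding.recorded
            for proc in procedures
        )
        if not outdated:
            kept.append(finding)
    return kept


if __name__ == "__main__":
    # Hypothetical record, loosely following the example in the text.
    findings = [
        Finding("ejection fraction", "EF 17% on echocardiogram", date(2022, 11, 1)),
        Finding("pseudocholinesterase deficiency", "documented", date(2015, 3, 2)),
    ]
    procedures = [Procedure("heart transplant", date(2024, 6, 1))]
    for item in relevant_findings(findings, procedures):
        print(item.topic, "-", item.detail)
```

Note that the rule compares dates, so an ejection fraction measured after the transplant would still appear in the synopsis.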

Additional general medical capabilities might include conversations about how best to work up a patient. To illustrate this, Figure 6 provides a sample ChatGPT commentary with suggestions on working up a hypothetical anemic woman, whereas Figure 7 shows the result when ChatGPT is asked to generate the text portion for an instructional slideshow for a talk on medical ethics.

ChatGPT can even contribute to the medical humanities. Figure 8 shows ChatGPT as a playwright contributing to developing a theatrical production centering on a dying woman. Figure 9 shows that ChatGPT is not a bad poet either.

AI-based medical apps that will certainly be developed for wide use include an app to manage type 1 and type 2 diabetes (with different algorithms for each) and an app to select an antibiotic for hospital use. Such apps may be free, like the popular Linux operating system, or cost money to download, like most Microsoft products. The FDA has guidance regarding medical software products used clinically.9 Some apps will be sponsored by Big Pharma, just like the books, videos and brochures that were distributed to doctors decades ago. Another app many clinicians might like is a perioperative pain management system based on specific consensus guidelines. In many cases, published clinical guidelines can be readily adapted for such purposes. Note that many or most of these apps will draw on rule-based AI.

Applications of AI to Anesthesiology

One of the earliest AI tools for anesthesiology was a program called ATTENDING developed in 1983 at Yale University by Perry Miller.10 This was a computer system to critique anesthetic plans by evaluating a patient’s medical problems, planned surgical procedures and proposed anesthetic methods, providing a risk–benefit analysis and alternative approaches. Over the four decades since that landmark effort, many more AI developments related to anesthesiology have been described or proposed. What follows is a sampling of these developments.

First, AI can potentially be used to help with the planning of anesthesia by identifying optimal protocols based on patient characteristics, medical history and procedural requirements. By developing personalized anesthesia plans, AI will help ensure quality anesthesia delivery while minimizing side effects. For instance, an AI planning advisor might list necessary equipment and drugs for upcoming cases or recommend dual antiemetic therapy for patients at high risk for postoperative nausea and vomiting. Such systems can be rule-based, for example by encoding procedural sedation guidelines or awake intubation management flowcharts.

Second, AI models are potentially useful in predicting clinical outcomes, including the likelihood of postoperative complications and need for extended recovery times in the PACU or ICU.11-14 For example, the online ACS NSQIP (American College of Surgeons National Surgical Quality Improvement Program) Surgical Risk Calculator estimates the risk for death or adverse outcomes after surgery, although it does not consider factors such as the surgeon’s experience.15

Third, AI-powered tools can potentially help with real-time clinical documentation, reducing the cognitive load on anesthesia providers and improving the quality of anesthetic records. This will likely ensure more accurate and comprehensive documentation, freeing up providers to focus on patient care.

Fourth, AI tools will be developed to help with clinical workflow and quality matters, potentially improving anesthesia safety practices. For instance, existing rule-based AI can remind providers to administer the next dose of antibiotics or alert users when a drug infusion is nearing completion.
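
Reminders of this kind reduce to a few explicit rules. The sketch below shows one possible shape for them, assuming hypothetical function names and a caller-supplied redosing interval. The 4-hour interval and 10-minute warning window in the example are placeholders for illustration only, not dosing guidance.

```python
from datetime import datetime, timedelta


def redose_due(last_dose, now, interval):
    """True when at least `interval` has elapsed since the last antibiotic dose."""
    return now - last_dose >= interval


def minutes_remaining(volume_ml, rate_ml_per_hr):
    """Minutes until an infusion runs out at the current rate."""
    if rate_ml_per_hr <= 0:
        raise ValueError("infusion rate must be positive")
    return volume_ml / rate_ml_per_hr * 60


def reminders(last_dose, now, interval, volume_ml, rate_ml_per_hr, warn_minutes=10):
    """Collect the reminder messages that currently apply."""
    messages = []
    if redose_due(last_dose, now, interval):
        messages.append("Antibiotic redose due")
    if minutes_remaining(volume_ml, rate_ml_per_hr) <= warn_minutes:
        messages.append("Infusion nearing completion")
    return messages


if __name__ == "__main__":
    # Hypothetical case: last dose at 08:00, current time 12:05,
    # 5 mL left in an infusion running at 60 mL/h.
    last = datetime(2024, 11, 21, 8, 0)
    now = datetime(2024, 11, 21, 12, 5)
    for message in reminders(last, now, timedelta(hours=4), 5, 60):
        print(message)
```

Because every rule is explicit, a clinician can read the code and see exactly why a reminder fired, which is one attraction of rule-based systems.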

Fifth, AI algorithms will be able to recommend personalized drug dosages based on patient data such as age, weight and medical history. AI-based closed-loop anesthesia delivery systems, incorporating EEG monitoring, also will adjust drug infusion rates in real time, potentially optimizing drug delivery and minimizing suboptimal dosing.16
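
The feedback idea behind closed-loop delivery can be shown with a deliberately simple stand-in: a proportional controller that nudges an infusion rate toward a target EEG-derived index. This is a toy sketch, not an AI system and not a clinical algorithm. The function name, the gain, the rate limits and the convention that a higher index means lighter anesthesia are all assumptions, and the units are arbitrary.

```python
def next_infusion_rate(current_rate, measured_index, target_index,
                       gain=0.1, min_rate=0.0, max_rate=20.0):
    """One proportional feedback step, clamped to [min_rate, max_rate].

    A measured index above target (assumed here to mean lighter-than-intended
    anesthesia) raises the rate; an index below target lowers it.
    """
    error = measured_index - target_index
    proposed = current_rate + gain * error
    # Never leave the allowed range, whatever the reading says.
    return max(min_rate, min(max_rate, proposed))


if __name__ == "__main__":
    rate = 10.0  # arbitrary units, not a real dose
    for reading in (60, 55, 50, 45):  # simulated index readings
        rate = next_infusion_rate(rate, reading, target_index=50)
        print(f"index {reading} -> rate {rate:.2f}")
```

The clamp is the important design choice here: whatever logic proposes a new rate, hard limits keep the output within a range the clinician has approved.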

AI will also eventually assist in the real-time monitoring of physiologic parameters, providing early detection of complications or deviations from the anesthesia plan. Automated alerts will prompt anesthesiologists to take corrective actions in response to specific patient conditions, such as hypotension and bradycardia after spinal anesthesia or the onset of sepsis.17

Finally, other applications include AI-assisted identification of sonoanatomy for ultrasound-guided regional anesthesia,18 identification of acid–base disorders from blood gas data19 and automated cardiac rhythm analysis.20

Is ChatGPT Trustworthy?

ChatGPT is like a genius friend who is super eager to help you and happy to answer any question you might have on almost any topic: from summarizing Plato’s “The Allegory of the Cave” to helping you write a computer program (Figure 5). ChatGPT can assist you instantly at no cost (a free version is currently available). ChatGPT has an astonishing intellect and is wonderfully polite. There is almost nothing ChatGPT cannot comment on intelligently, eloquently and with grace.

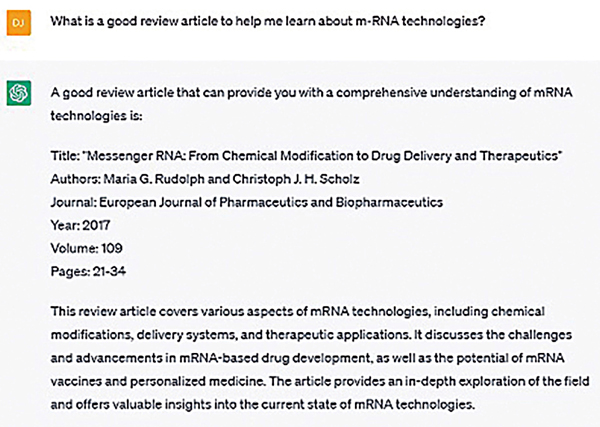

But there is a problem: Your genius friend is not fully trustworthy. Sometimes ChatGPT provides incorrect information, rather like an honors student who just misses an A on an oral exam because, despite generally good answers, some misunderstandings became apparent during the discussion. The bottom line is this: Verify everything, especially literature citations (Figure 4).

Exploring the ‘Dark Side’

Technology has a dark side, such as spam emails and cyberbullying. As we enter the rapidly evolving “Age of AI,” we should evaluate how new AI developments will affect our world and plan for possible negative consequences. Some thinkers are concerned that AI proliferating without limits could end humanity as we know it.

An individual asking ChatGPT for technical details on the various synthesis pathways for making sarin, carfentanil or other dangerous molecules is an obvious “red flag” that warrants potential action by authorities to ensure the safety of the population. But which action? Should OpenAI automatically forward (via an AI filter, of course) worrisome ChatGPT queries to the FBI and other agencies concerned with national security? And what constitutes “worrisome”? What about the author researching bioweapons in preparation for writing a spy novel where James Bond saves the world from the deployment of deadly terrorist toxins? These issues illustrate the special challenges of developing corporate and government policies regarding AI deployment.

As one policy example, some controversial thinkers suggest that a tax on AI and robotic services will be needed to help support individuals who have become unemployed as a result of these very services. Such policy suggestions are sometimes made in conjunction with the idea of providing all adult citizens with a universal basic income.

Another difficult issue concerns essays submitted by high school and university students. As you can see from the examples provided herein, AI chatbots can produce commentaries of sufficient quality to be genuinely indistinguishable from human-generated material, generating truly original text that can pass plagiarism detection software.

Another concern with AI chatbots is “hallucinations.”21 Users of AI chatbots like ChatGPT more than occasionally find that the responses provided contain “careless errors” (to use a term frequently used by one of my teachers). For example, when conversing with ChatGPT on a particular scientific or clinical matter, you might ask for specific citations to the scientific literature. Sometimes the citations provided turn out to be duds when entered into PubMed.gov; they were actually “hallucinations” with no real-world existence (Figure 4). Other examples of hallucinated errors include mistaking the nationality of a prominent person or providing invented information, such as getting a national capital wrong.

Finally, some experts are concerned that AI might be harmful to human health in some situations. For example, Federspiel et al22 worry that this might happen due to “the control and manipulation of people,” as exemplified by China’s Social Credit System. Other concerns they identify are the effects of AI on unemployment and the use of lethal autonomous weapons systems driven by AI.

Giving Credit

When an AI chatbot has contributed substantially to a formal talk or scientific manuscript, this fact should be acknowledged, in some manner, much as one acknowledges colleagues who comment critically on early drafts of a manuscript. “This slide made with ChatGPT assistance” is a little textbox I occasionally add to some of my PowerPoint slides. And, of course, I used ChatGPT to help with producing the text for this article, mostly to get suggestions to see how the text might be improved.

Conclusion

The above exploration shows that AI chatbots offer truly transformative potential in clinical applications. These advanced AI tools have demonstrated their prowess in enhancing learning, problem-solving and creative content generation. As we continue to harness the power of these new technologies, it is imperative to strike a balance between their extraordinary capabilities and ethical considerations to ensure they are applied responsibly.

References

Copyright © 2024 McMahon Publishing, 545 West 45th Street, New York, NY 10036. Printed in the USA. All rights reserved, including the right of reproduction, in whole or in part, in any form.

Discovering the AI Capabilities Introduced by ChatGPT: A Clinical Exploration